What is the relationship between trust and security, security and privacy, privacy and personal data protection? For some time now, I have known that there was something wrong with the so-called trust technologies, but I had not taken the time to pin down the source of the problem. Apart from rechristening them distrust technologies, I did not make the effort to explore the matter any further. Here are two excerpts from previous posts:

“Is there an escape from an alternative that can only lead to an escalation in the development of distrust technologies?” (in Why Open Badges Could Kill the Desire to Learn?)

“One of the most interesting and undervalued features of the Open Badge Infrastructure is trust: I have commented before that there is a risk for the Open Badges’ pretty pictures to become what the proverbial tree is to the forest of trust. I’ve also written that OBI is a native trust infrastructure, while most of the so-called trust architectures would be better described as distrust architectures (in a native trust environment, trust is by default, while distrust is generated by experience; in a distrust environment distrust is by default while trust is generated by experience).” (in Punished by Open Badges?)

Designing Principles for a (dis)Trusted Environment

What led me to explore the issue of trust and security further was my participation in a workshop organised by the Aspen Institute at SXSWedu 2015. The participants were invited to produce a series of scenarios eliciting the design principles of a trusted [digital] environment. The workshop took place the day after a session on “Designing Principles for a Trusted Environment” during which the winners of the DML Trust Challenge were announced.

While the challenge we were invited to address was the design of a trusted environment, what struck me in most of the proposed scenarios was that they did exactly the opposite: they designed an environment where distrust was the founding principle. The design principle for a distrusted environment was:

If you have a problem with trust the solution is increased control and security measures.

While this principle might sound fine to the superficial reader, the problem is that it reveals a misconception of what trust is about and, consequently, of how to deal with situations where low levels of trust are an issue. While both trust and security are related to safety, they sit at opposite ends of a spectrum.

One can take security measures and send in security forces, but one cannot take trust measures or send in trust forces. Security is something you can do to things; trust can only come from within. Mistaking one for the other, security for trust, could (and generally does) have deleterious effects on trust.

The table below provides an overview of the relationship between trust, security and safety:

| Safety | Form | Costs | Social consequences |
|---|---|---|---|
| Trust | Intrinsic; natural | None | Social inclusion and development |
| Security | Extrinsic; a product or a service | Can be expensive | Security firms have vested interests in weakening trust bonds |

About safety and security: while ‘safe’ and ‘secure’ are synonyms, they do not convey exactly the same meaning. Safety comes from the Latin salvus, ‘uninjured.’ The state of being ‘uninjured’ is a natural property; it is not something you can do to something or someone else. You can leave someone uninjured, but you cannot ‘uninjure’ someone (unless you have the power to make time flow backwards!). Security, on the other hand, from the Latin securus, ‘without care,’ is something you can do to something or someone else: you can secure a place (a prison) or secure a person (a prisoner). Securing conveys the image of protecting the inside against the outside, us vs. them: locks, fences, tear gas, barbed wire, police, army and weapons are just a small sample of the security paraphernalia. And as history keeps reminding us, security measures are not exactly conducive to making places safer!

The deleterious effects of mistaking Security for Trust

To provide an illustration of what an actual trusted environment could look like, the organisers of the workshop invited the participants to focus their attention on the risks of online addiction.

Among the scenarios proposed to address online addiction were:

- An app limiting the number of tweets per day;

- Parents collecting mobile phones before the children go to bed;

- Setting regulations and punishing those who do not respect them;

- An app limiting the time spent online, etc.

A piece of software limiting the number of tweets per day indisputably deserves to be called a distrust technology. The collection of mobile phones before bedtime is hardly a demonstration of an increased level of trust between parents and children; it is just the reiteration of the asymmetrical relationship between parents and children: undisputed authority on the one hand, unconditional subservience on the other.

So while the challenge we were invited to address was how to create the conditions for trusting children to make reasonable use of online time and resources, most of the scenarios were about creating artificial barriers and enforcing the control of authorities, parental and/or educational. The subtext was: if you want to be trusted, accept becoming subservient, and do not attempt to hack your mobile phone to bypass the authorised number of daily SMS imposed by our great innovation!

The scenario our group produced was rather different. It was about inviting students to theorise and design their own relationship to technology. While acknowledging that online addiction could be a real problem, we decided that it was first and foremost a superb learning opportunity: learners would lead a research project, interview experts to understand what the issues are and how they have been and could be addressed, design a theoretical framework to compare the different options and, eventually, make the final decision on what is good for them as an empowered learning community.

The starting point of our scenario was trust; the arrival point was increased trust through a better understanding of the world we live in together. For the other scenarios, the starting point was distrust, and the arrival point was increased control and the expectation of greater subservience…

Trust and security work in inverse proportion

What the authors of the control/subservience scenarios did not realise is that increasing security measures or reinforcing the power of authorities, by whatever order of magnitude, will not increase trust by even an iota. Worse, when trust is already low, increasing security measures is very likely to reduce the current level of trust even further.

Trust and security work in inverse proportion: the more trust there is, the fewer extrinsic security measures are required; the more extrinsic security measures are taken, the less trustworthy the system becomes.

While we intuitively identify a link between trust and security, their relationship is counterintuitive. It is a gross misinterpretation to imagine that the solution to increasing trust has anything to do with increasing the level of security and control. Increasing trust should be about… increasing trust (a tautology) rather than increasing security (a contradiction).

Moreover:

Increasing security measures is about addressing the symptoms, not the causes, of failing trust. There is no way to increase trust other than taking the necessary steps to… increase trust!

When security takes over, trust is on the wane

Safety is an organic and intrinsic feature of environments characterised by high levels of trust. In environments marked by low levels of trust, security tends to grow as an autonomous, extrinsic feature, something that can be provided by a third party. When teachers are not trusted by governments, the solution to ensuring that educational standards are met is increased inspection and systematic testing of pupils. When female patients do not trust male doctors, the solution is the mandatory presence of a woman during physical examinations. When trust in the Republic is so low in parts of French territory that representatives of any authority (not only the police but also firefighters, emergency services and doctors) are regularly attacked, the solution is to organise police commandos using raid techniques, as if it were a guerrilla war.

When the sense of safety and integrity provided by trusted environments is on the wane, when people feel disempowered, two main options are offered: addressing the causes by rebuilding the preconditions of trust and empowerment, or addressing the symptoms by taking measures that enforce security. The fact that the second option is financially and socially more costly, not to mention morally wrong, seems to be of no relevance to a number of policy makers…

Protection (of personal data) vs. Projection

My awareness of the counterintuitive relationship between trust and security goes back a few years, to 2008, when I was involved in TAS³, a forward-looking research project related to trust and security. Here is the description of the project after its completion in December 2011:

“The TAS³ project has researched & developed a trusted architecture and set of adaptive security services which preserve personal privacy and confidentiality in dynamic environments. Specifically, TAS³ has contributed to the design of a next generation trust & security architecture […]” (source)

While the project produced outstanding outcomes, I felt that there was something wrong with a claim putting trust and security on the same level. TAS³ did not announce the development of a secured trust architecture supporting the development of trustworthy relationships; that would have been entirely different. Instead, there were two separate things: a security architecture enforcing the protection of personal data and privacy, and a trust architecture supporting the development of trustworthy relationships.

The conceptual problem I found in TAS³ (and most similar projects) is the very idea of privacy and its relationship to the modalities for the protection of personal data.

While most projects related to personal data are focused on protection, the focus of my work for many years has been on projection: how can our personal data be used to support the construction of our identity, and vice versa? Of course, protection is a prerequisite, and the type of protection we need should be designed with our capacity to project ourselves in mind. After all, if we have personal data online, it is not just to be hidden in a digital safe! The wrong kind of protection could impede our ability to project our data, and ourselves.

The dominant metaphors used to portray personal data protection are the personal locker, the personal data store (PDS) and the fortress, i.e. structures in which all our personal data is stored and protected by the digital equivalent of high, thick walls.

The high, thick walls of the medieval fortress are obsolete for protecting our data in a world of open metropolises

The idea of privacy as the protection of our personal data behind high and thick walls is based on the following fallacies:

- personal data is a set of attributes that can be isolated from the rest of the world

- closed, high and thick digital walls are an effective protection in an open world

The first statement is a fallacy because most data sets are shared and cannot be isolated. Most of what is considered personal data is shared: the very same piece of medical information can be shared between the patient, the doctor(s), the nurse(s), family members, health authorities, pharmaceutical laboratories, patient lobby groups, etc. At a more mundane level, a photograph of a group of friends ‘belongs’ to the person who took it and to those who appear in it; it can then be shared with the friends of the friends, of the friends… Unless we believe in enforcing what I call digital lobotomy, once information is out, it is difficult to bring it back in.

It follows that the second statement is also a fallacy: our data lives in an open metropolis (which does not mean that it is necessarily public!) and cannot be located at any single point: our data is ubiquitous and shared.

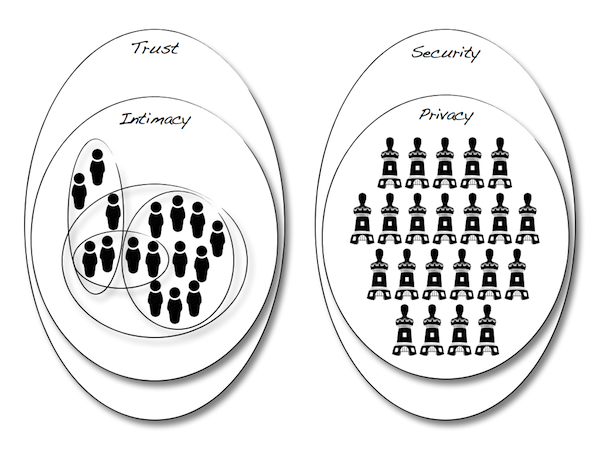

Privacy vs. intimacy

While privacy is a legitimate right, how can it be enforced if the digital equivalent of high and thick walls is a fallacy? What should we be looking for?

Since I wrote The Internet of Subjects Manifesto, I have been advocating a more potent concept than privacy to address the issue of personal data protection: intimacy. Intimacy starts with the recognition that data is shared, and the way to share it while keeping it protected is to create a technology where each piece of data can be shared within a community while being protected from external prying eyes: a kind of communal privacy.

What metaphor could we use to describe the kind of protection/sharing mechanism intimacy requires? The fortress or the locker/safe does not work, as we would be forced to spend all of our time changing the shape of the walls of our fortresses, opening new doors, closing old ones and enforcing multiple access policies. At best, we would be creating something akin to a maze. More likely, the outcome would look like an unmanageable mess.

The mechanisms for managing the different levels/circles of intimacy would make it possible for individuals to tailor with extreme accuracy the visibility of the data they want to share across the different circles of trust: from single individuals, to individuals sharing the same interests (I want to share my passion for train spotting with other train spotters, while not making it visible to the rest of the world), to open and closed communities (the club of those for whom emerald green is the favourite colour, the association against pet obesity, etc.).
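To make the idea of circles of intimacy a little more concrete, here is a minimal sketch, assuming that each piece of data carries the list of circles it is shared with, and that a viewer may see it only if they are its owner or a member of at least one of those circles. The names `Circle`, `DataItem`, `can_see` and `visible_items` are hypothetical and illustrative only; this is not the design of TAS³ or of the Open Passport.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Circle:
    """Hypothetical circle of intimacy: a named group of members."""
    name: str
    members: frozenset[str]


@dataclass
class DataItem:
    """Hypothetical piece of personal data shared with zero or more circles."""
    owner: str
    content: str
    shared_with: list[Circle] = field(default_factory=list)


def can_see(viewer: str, item: DataItem) -> bool:
    """The owner always sees their own data; anyone else must belong
    to at least one circle the item is shared with."""
    if viewer == item.owner:
        return True
    return any(viewer in circle.members for circle in item.shared_with)


def visible_items(viewer: str, items: list[DataItem]) -> list[DataItem]:
    """Return only the items this viewer is allowed to see."""
    return [item for item in items if can_see(viewer, item)]


if __name__ == "__main__":
    train_spotters = Circle("train spotters", frozenset({"alice", "bob"}))
    emerald_club = Circle("emerald green club", frozenset({"carol"}))

    photo = DataItem("alice", "photo of a rare locomotive", [train_spotters])
    note = DataItem("alice", "favourite shade of green", [emerald_club])

    # Each viewer sees only what their circles give them access to.
    for viewer in ("bob", "carol", "dave"):
        seen = [item.content for item in visible_items(viewer, [photo, note])]
        print(viewer, seen)
```

Running it, bob sees only the locomotive photo, carol only the note about emerald green, and dave, who belongs to no circle, sees nothing. In this sketch the visibility rule travels with each piece of data rather than depending on a single walled store, which is one possible way of reading the idea of communal privacy.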

This will be one of the directions explored with the Open Passport through the creation of a ‘badge-centric’ trust network. Not only will we use badges and collections of badges as supports for conversations (as a kind of mailing list), but we will also explore how to use the Open Passport as a means of enabling multiple levels of intimacy.

Stay tuned!

One thought on “OpenBadges: The Deleterious Effects of Mistaking Security for Trust”